As of April 2026, self-driving cars have moved from science fiction to a daily reality. At the heart of this change is Computer Vision (CV), a branch of artificial intelligence that teaches computers to see and understand the world. The many applications of computer vision in autonomous vehicle technology continue to drive progress in this field. For a self-driving car, “seeing” is the most important step. By using cameras and smart software, cars can now spot objects, track movement, and drive through busy cities, in some conditions as well as or even better than humans.

The stakes are very high. Human error plays a part in over 90% of road accidents. Computer vision offers a safer future because machines don’t get tired or distracted. This article explores how cars use this technology to drive themselves and the challenges engineers are solving in 2026.

Computer vision starts with gathering data. Modern self-driving cars use high-resolution cameras to get a 360-degree view. These cameras act as the “eyes” of the car, capturing colors and textures. However, cameras are often paired with other tools like LiDAR and Radar. This team-up is called Sensor Fusion, and it helps the car build a more complete and reliable map of its surroundings than any single sensor could.
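To make the idea of Sensor Fusion concrete, here is a minimal sketch of one common way to merge two distance readings: inverse-variance weighting, where the more trustworthy sensor gets more say. The class name, function name, and the numbers are assumptions made up for illustration; production systems typically use richer methods such as Kalman filters.

```python
from dataclasses import dataclass


@dataclass
class RangeEstimate:
    """A distance reading in meters plus its variance (uncertainty squared)."""
    distance_m: float
    variance: float


def fuse(estimates: list[RangeEstimate]) -> RangeEstimate:
    """Combine independent estimates by inverse-variance weighting."""
    if not estimates:
        raise ValueError("need at least one estimate")
    # A smaller variance means a more trusted sensor, so it gets a larger weight.
    weights = [1.0 / e.variance for e in estimates]
    total = sum(weights)
    distance = sum(w * e.distance_m for w, e in zip(weights, estimates)) / total
    # The fused variance is never larger than the best single sensor's variance.
    return RangeEstimate(distance, 1.0 / total)


# Hypothetical readings for the same vehicle ahead.
camera = RangeEstimate(distance_m=42.0, variance=4.0)  # assumed weaker at depth
radar = RangeEstimate(distance_m=40.0, variance=1.0)   # assumed stronger at range
fused = fuse([camera, radar])
print(f"{fused.distance_m:.1f} m, variance {fused.variance:.2f}")
```

The fused distance lands closer to the radar reading because radar was given the smaller variance, and the combined uncertainty is lower than either sensor's alone.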

While Tesla mostly relies on cameras alone, other leaders like Waymo use a mix of sensors. Either way, cameras remain essential in 2026. They are the tools best suited to read street signs, see the color of traffic lights, and understand the body language of people on the sidewalk. Radar and LiDAR measure distance and speed well, but they do not capture color.

Once a camera takes a picture, the car must figure out what it is looking at. First, it detects the object (finding where it is). Then, it classifies it (naming what it is). By 2026, cars can tell the difference between a stray dog, a cyclist, or an ambulance.

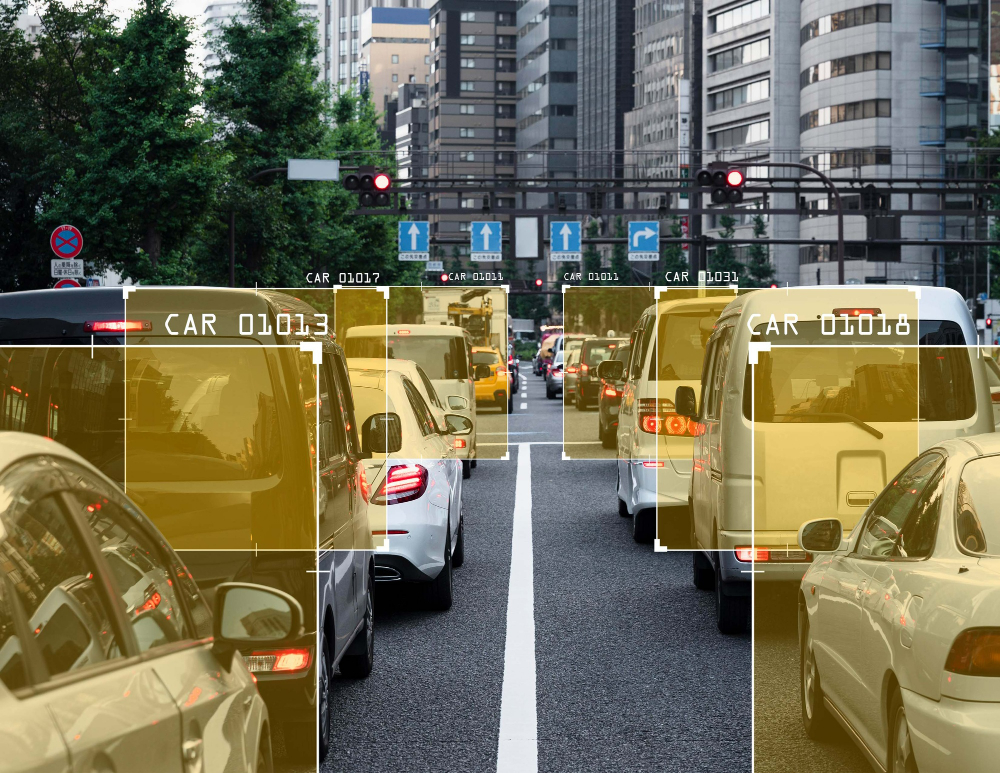

Speed is the most important part. A car going 65 mph moves about 95 feet every second. Modern systems process images in milliseconds. They draw “bounding boxes” around everything they see. This allows the car’s brain to estimate the distance and speed of every threat almost instantly. Today, these systems are over 99% accurate in good weather.
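The arithmetic behind the 65 mph figure, and one simple way a bounding box can be turned into a distance, can be sketched as follows. The distance estimate uses the pinhole camera model, which is only one possible approach; the focal length, the assumed real-world car height, and the box sizes are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass

FEET_PER_MILE = 5280
SECONDS_PER_HOUR = 3600


def feet_per_second(speed_mph: float) -> float:
    """Convert miles per hour to feet per second."""
    return speed_mph * FEET_PER_MILE / SECONDS_PER_HOUR


@dataclass
class BoundingBox:
    """Axis-aligned box in pixel coordinates (top-left and bottom-right)."""
    x1: float
    y1: float
    x2: float
    y2: float

    @property
    def height_px(self) -> float:
        return self.y2 - self.y1


def estimate_distance_m(box: BoundingBox, real_height_m: float,
                        focal_length_px: float) -> float:
    """Pinhole model: distance = focal length * real height / pixel height."""
    return focal_length_px * real_height_m / box.height_px


def closing_speed_mps(dist_prev_m: float, dist_now_m: float, dt_s: float) -> float:
    """Positive when the object is getting closer."""
    return (dist_prev_m - dist_now_m) / dt_s


print(f"65 mph = {feet_per_second(65):.1f} ft/s")

# Hypothetical camera and detections of the same car, half a second apart.
FOCAL_LENGTH_PX = 1000.0
CAR_HEIGHT_M = 1.5
earlier = BoundingBox(300, 200, 380, 250)  # 50 px tall
later = BoundingBox(295, 195, 390, 255)    # 60 px tall, so the car is nearer
d_prev = estimate_distance_m(earlier, CAR_HEIGHT_M, FOCAL_LENGTH_PX)
d_now = estimate_distance_m(later, CAR_HEIGHT_M, FOCAL_LENGTH_PX)
print(d_prev, d_now, closing_speed_mps(d_prev, d_now, dt_s=0.5))
```

A box that grows taller between frames means the object is getting closer, which is why frame-to-frame box changes carry speed information.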

“Semantic Segmentation” is a fancy term for a simple job: labeling every single dot (pixel) in an image. This helps the car know exactly where the road ends and the sidewalk begins. It can even tell the difference between a puddle and solid ground.

This is very helpful when lane lines are missing or faded. In 2026, cars use “Panoptic Segmentation,” which combines pixel-by-pixel labeling with the detection of individual objects. It doesn’t just see a group of “cars” as one big blob. It recognizes every individual vehicle so that each one can be tracked separately. This helps the car know how fast each neighbor is moving.
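The difference between a single “cars” blob and separate vehicles can be shown with a toy example. The sketch below takes a tiny hand-written semantic mask and splits the car pixels into instances using connected regions. This is only a stand-in for illustration: real panoptic models predict instance IDs directly, and this simple method would merge two cars that touch in the image. The mask and function name are hypothetical.

```python
from collections import deque

# Tiny hypothetical semantic mask: one class label per pixel.
# S = sidewalk, R = road, C = car
SEMANTIC_MASK = [
    "SSSSSSSS",
    "RCCRRCCR",
    "RCCRRCCR",
    "RRRRRRRR",
]


def label_instances(mask, target):
    """Split pixels of class `target` into 4-connected instances.

    Returns (grid, count). The grid holds 0 for other pixels and
    1, 2, ... for each separate instance of the target class.
    """
    rows, cols = len(mask), len(mask[0])
    ids = [[0] * cols for _ in range(rows)]
    count = 0
    for r in range(rows):
        for c in range(cols):
            if mask[r][c] != target or ids[r][c]:
                continue
            # Found an unlabeled target pixel: flood-fill its whole region.
            count += 1
            ids[r][c] = count
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and mask[ny][nx] == target and not ids[ny][nx]:
                        ids[ny][nx] = count
                        queue.append((ny, nx))
    return ids, count


instance_ids, car_count = label_instances(SEMANTIC_MASK, "C")
print(car_count)
for row in instance_ids:
    print(row)
```

The semantic mask only says “these pixels are car.” The instance grid adds which car each pixel belongs to, which is what makes per-vehicle tracking possible.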

Staying in the lane is a basic but vital task. Using smart algorithms, the car finds lane markers even when it’s dark or raining. This data goes to the “Path Planning” brain, which decides the safest way to move forward.

The big challenge today isn’t driving on perfect highways; it’s the “edge cases.” This includes faded lines on country roads or messy construction zones. Modern cars use “contextual reasoning.” This means if the lines are gone, the car follows the tire tracks of the vehicle in front or looks at the curb to figure out where the lane should be.
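The fallback logic described above can be sketched as a simple priority list: use painted lines when they are visible, then the vehicle ahead, then the curb. The function name, the cue names, and the 3.7 m lane width are assumptions for illustration, and the curb is assumed to border the lane on the right. Real planners weigh many cues together rather than picking just one.

```python
from typing import Optional, Tuple

LANE_WIDTH_M = 3.7  # assumed typical lane width, not a measured value


def estimate_lane_center(
    left_line_m: Optional[float],
    right_line_m: Optional[float],
    lead_vehicle_m: Optional[float] = None,
    right_curb_m: Optional[float] = None,
    lane_width_m: float = LANE_WIDTH_M,
) -> Tuple[Optional[float], str]:
    """Return (lane center offset in meters, name of the cue used).

    Offsets are lateral distances from the car's own centerline,
    negative to the left and positive to the right. None means the
    cue was not detected in this frame.
    """
    if left_line_m is not None and right_line_m is not None:
        return (left_line_m + right_line_m) / 2.0, "lane_lines"
    if left_line_m is not None:
        return left_line_m + lane_width_m / 2.0, "left_line_only"
    if right_line_m is not None:
        return right_line_m - lane_width_m / 2.0, "right_line_only"
    if lead_vehicle_m is not None:
        # No paint visible: follow the track of the vehicle in front.
        return lead_vehicle_m, "lead_vehicle"
    if right_curb_m is not None:
        # Last resort: assume the lane sits half a lane width left of the curb.
        return right_curb_m - lane_width_m / 2.0, "curb"
    return None, "no_cue"


print(estimate_lane_center(-1.8, 1.9))                      # both lines visible
print(estimate_lane_center(None, None, lead_vehicle_m=0.3)) # faded country road
print(estimate_lane_center(None, None, right_curb_m=2.0))   # only a curb in view
```

Returning the name of the cue alongside the number lets later stages lower their confidence, for example by slowing down when the estimate comes from the curb alone.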

Traffic Sign Recognition (TSR) helps cars follow the law. This is more than just reading a speed limit. It means understanding signs like “No Left Turn” or a “Stop” sign held by a crossing guard.

In 2026, these systems are very localized. A car in Germany understands the Autobahn, while a car in Japan can read Japanese characters on road signs. Studies show that this technology reduces speeding by up to 85%. A computer simply doesn’t “miss” a sign like a distracted human driver might.

The hardest part of driving is predicting human behavior. It isn’t enough to see a person on the sidewalk; the car needs to know if they are about to step into the street. Cars now use “Pose Estimation” to track human “skeletons,” meaning the positions of joints such as the head, shoulders, and knees. By looking at the tilt of a head, the AI can estimate whether a person is looking at the car or their phone.
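As a rough illustration of what can be read from a pose “skeleton,” the sketch below takes a few 2D face keypoints and computes two simple cues: how far the head is tilted sideways, and whether the face is turned toward the camera. The keypoint layout, function names, and the tolerance are assumptions for illustration. Here “left” and “right” mean left and right in the image, and a 2D heuristic like this cannot reliably tell that someone is looking down at a phone.

```python
import math

Point = tuple[float, float]  # (x, y) in pixels; y grows downward in images


def head_roll_degrees(left_eye: Point, right_eye: Point) -> float:
    """Angle of the line between the eyes relative to horizontal."""
    dx = right_eye[0] - left_eye[0]
    dy = right_eye[1] - left_eye[1]
    return math.degrees(math.atan2(dy, dx))


def is_facing_camera(nose: Point, left_ear: Point, right_ear: Point,
                     tolerance: float = 0.25) -> bool:
    """True when the nose sits near the horizontal midpoint between the ears.

    When a head turns away, the nose drifts toward one ear in the image.
    """
    span = right_ear[0] - left_ear[0]
    if span == 0:
        return False  # ears overlap in the image: a side-on view
    ratio = (nose[0] - left_ear[0]) / span
    return abs(ratio - 0.5) <= tolerance


# Hypothetical keypoints for two pedestrians.
print(head_roll_degrees((100, 100), (140, 100)))             # level head
print(is_facing_camera((120, 110), (90, 105), (150, 105)))   # nose centered
print(is_facing_camera((148, 110), (90, 105), (150, 105)))   # nose near one ear
```

Cues like these are usually fed into a learned model along with body motion over time, rather than being used as hard rules on their own.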

Cars can also understand hand signals. If a police officer or a construction worker signals for the car to stop, the cameras catch the motion and understand it. This “social intelligence” is key for city driving, where drivers and walkers communicate with gestures every day.

Even in 2026, computer vision isn’t perfect. Bad weather is the biggest problem. Heavy rain, thick fog, and snow can block a camera’s view. If mud or ice covers the lens, the car essentially loses its sight in that direction.

Another issue is “Occlusion,” which is when one thing hides another. If a child runs out from behind a parked truck, the car has very little time to react. To solve this, cars use “probabilistic mapping.” This means the car assumes there might be a hidden danger in risky spots, like near a school bus. Engineers are also working to stop “Adversarial Attacks,” where people use stickers to trick the car into misreading a sign.
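One way to act on an assumed hidden danger is to limit speed so the car can always stop within the distance it can actually see. The sketch below solves the stopping-distance relation v·t + v²/(2a) = d for v, where t is reaction time, a is braking deceleration, and d is the visible distance. The default reaction time, deceleration, and hazard threshold are hypothetical values, not figures from any real vehicle.

```python
import math


def max_safe_speed_mps(visible_distance_m: float,
                       reaction_time_s: float = 0.5,
                       max_decel_mps2: float = 6.0) -> float:
    """Highest speed from which the car can stop within the visible distance.

    Solves v*t + v^2 / (2*a) = d for v using the quadratic formula.
    """
    a, t, d = max_decel_mps2, reaction_time_s, visible_distance_m
    return -a * t + math.sqrt((a * t) ** 2 + 2.0 * a * d)


def planned_speed_mps(cruise_speed_mps: float,
                      visible_distance_m: float,
                      hazard_probability: float,
                      threshold: float = 0.1) -> float:
    """Slow down only where a hidden hazard is judged likely enough."""
    if hazard_probability < threshold:
        return cruise_speed_mps
    return min(cruise_speed_mps, max_safe_speed_mps(visible_distance_m))


# Passing a parked truck that hides the sidewalk 10 m ahead.
print(f"{planned_speed_mps(15.0, 10.0, hazard_probability=0.30):.2f} m/s")
# Open road with a clear view and a low chance of hidden hazards.
print(f"{planned_speed_mps(15.0, 10.0, hazard_probability=0.01):.2f} m/s")
```

The hazard probability here stands in for the “risky spot” judgment in the text, such as being near a school bus. How that probability is estimated is a separate problem that this sketch does not address.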

To get smart enough to drive, cars need billions of miles of practice. But waiting for accidents to happen in the real world is too dangerous. Instead, cars learn in “Digital Twins.” These are hyper-realistic video games where cars can practice scary scenarios millions of times without any risk.

This “synthetic data” lets engineers test rare events, like a deer jumping into the road at night. The car learns from these fake miles and then applies that knowledge to the real world. Today, over 80% of a car’s training happens in these simulations. This ensures the AI is ready for things a human driver might only see once in a lifetime.
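A simple way to picture how simulations cover rare events is a scenario generator that deliberately oversamples them. The sketch below draws random test scenarios and gives rare hazards, like a deer, extra weight. The scenario fields, category lists, and weights are all hypothetical; a real simulator would take settings like these as inputs and render a full 3D scene from them.

```python
import random
from dataclasses import dataclass

TIMES_OF_DAY = ["day", "dusk", "night"]
WEATHER = ["clear", "rain", "fog", "snow"]
# Hypothetical hazards with sampling weights. Rare events get extra weight
# so the simulator shows them far more often than real roads would.
HAZARD_WEIGHTS = {"none": 1.0, "pedestrian": 2.0, "deer": 4.0}


@dataclass(frozen=True)
class Scenario:
    time_of_day: str
    weather: str
    hazard: str
    hazard_distance_m: float


def sample_scenarios(count: int, seed: int = 0) -> list[Scenario]:
    """Draw `count` random scenarios; the same seed gives the same batch."""
    rng = random.Random(seed)
    hazards = list(HAZARD_WEIGHTS)
    weights = list(HAZARD_WEIGHTS.values())
    scenarios = []
    for _ in range(count):
        scenarios.append(Scenario(
            time_of_day=rng.choice(TIMES_OF_DAY),
            weather=rng.choice(WEATHER),
            hazard=rng.choices(hazards, weights=weights, k=1)[0],
            hazard_distance_m=round(rng.uniform(5.0, 80.0), 1),
        ))
    return scenarios


for scenario in sample_scenarios(3, seed=42):
    print(scenario)
```

Fixing the seed makes a batch repeatable, so a failure found in one simulated scenario can be replayed exactly after the software is changed.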

Computer vision is the technology that lets cars understand our messy world. By moving from simple object naming to predicting human intent, these systems have almost caught up to human sight.

In short, the “eyes” of self-driving cars are getting faster and smarter every day. While there are still hurdles like bad weather, the road to full autonomy is clearer than ever.