Artificial intelligence has reshaped industries from healthcare to entertainment. At the core of this shift is the neural network, a computational model loosely inspired by the human brain. These networks drive many of today’s most visible applications, such as self-driving cars, medical image analysis, and generative AI like chatbots. The art and science of neural network optimization is essential to understanding how these technologies achieve their results.

However, success requires more than just stacking layers of digital neurons. A model’s performance depends on its architecture, training methods, and data quality. Poorly designed networks often struggle with slow learning, “overfitting” (memorizing the training data instead of learning general patterns), or high computing costs. Consequently, optimizing these architectures has become a central focus of AI research.

The journey of neural networks began in the 1950s with the perceptron. While groundbreaking, it could only solve linearly separable problems, where a single straight line (or plane) divides the classes. The 1980s popularized backpropagation, a method that allowed multi-layer networks to learn effectively.

The real “Deep Learning Revolution” arrived in the 2010s. The combination of massive datasets and powerful Graphics Processing Units (GPUs) allowed deep networks to outperform earlier approaches on tasks such as image recognition, speech recognition, and machine translation.

A neural network is essentially a series of mathematical transformations organized into layers:

Input Layers: These receive raw data (like pixels or text).

Hidden Layers: These extract features and find hidden patterns.

Output Layers: These deliver the final prediction or decision.

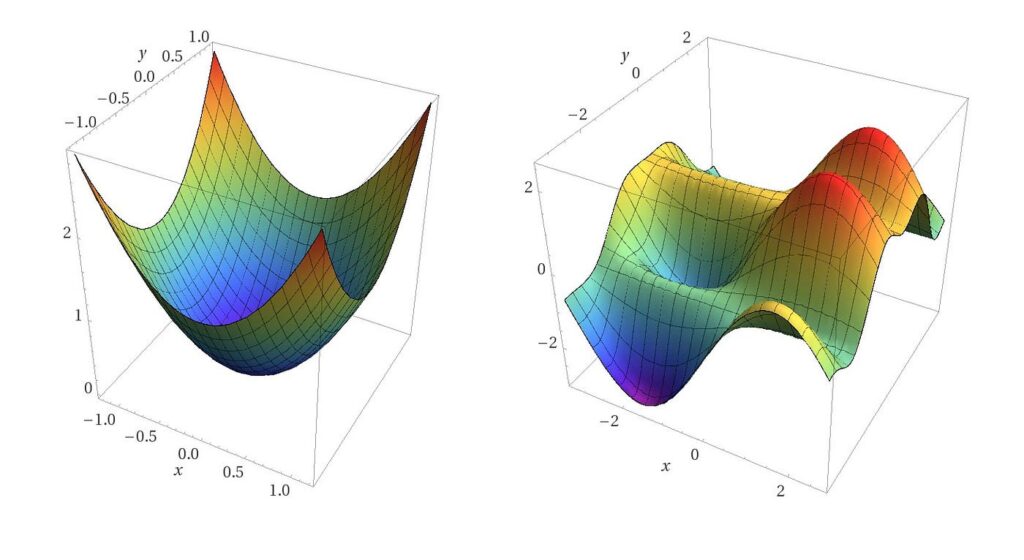

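To learn, the network uses Weights and Biases. Weights act like “knobs” that determine how much importance to give a specific input, while a bias shifts a neuron’s output up or down regardless of the input. Each neuron computes a weighted sum of its inputs plus its bias. On their own, such sums can only model linear relationships, so we pass each one through an Activation Function like ReLU (Rectified Linear Unit) or Sigmoid, which lets the network capture complex, non-linear patterns.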
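To make the weighted-sum idea concrete, here is a minimal sketch of one forward pass through a tiny network, written in plain Python. The helper name `dense_layer` and every number below are assumptions chosen for illustration; they do not come from a trained model.

```python
import math

def relu(x):
    """Rectified Linear Unit: pass positives through, clamp negatives to 0."""
    return max(0.0, x)

def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def dense_layer(inputs, weights, biases, activation):
    """Compute activation(w . x + b) for each neuron in the layer.

    weights[j] holds the weights of neuron j, one per input.
    """
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        weighted_sum = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(activation(weighted_sum))
    return outputs

# Illustrative values only (hypothetical, not taken from a trained model).
inputs = [1.0, 2.0]
hidden = dense_layer(inputs, [[0.5, -1.0], [1.0, 1.0]], [0.0, -1.0], relu)
output = dense_layer(hidden, [[1.0, 0.5]], [0.0], sigmoid)
print(hidden, output)
```

The first hidden neuron’s weighted sum is negative, so ReLU clamps it to zero. That clamping is the non-linearity at work. Real frameworks perform the same arithmetic with matrix operations instead of Python loops.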

Different problems require different designs:

Convolutional Neural Networks (CNNs): The gold standard for vision. They excel at identifying objects in photos or scanning medical X-rays.

Recurrent Neural Networks (RNNs) & LSTMs: Designed for sequences, such as speech or stock market trends, where the order of data matters.

Transformers: The technology behind modern AI assistants. They use “self-attention” to process entire sentences at once, capturing context much better than older models.
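Of the three designs, self-attention is perhaps the easiest to show in a few lines. The sketch below is a deliberately simplified version of scaled dot-product self-attention. It assumes the queries, keys, and values are the token vectors themselves, whereas a real Transformer learns separate projections for each. The function names and the two-dimensional “embeddings” are made up for illustration.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Scaled dot-product self-attention over a list of token vectors.

    Simplifying assumption: queries, keys, and values are the token
    vectors themselves; a real Transformer learns separate projections.
    """
    dim = len(tokens[0])
    outputs = []
    for query in tokens:
        # Score this token against every token in the sequence.
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in tokens]
        weights = softmax(scores)
        # Each output is a weighted mix of all token vectors.
        mixed = [sum(w * value[i] for w, value in zip(weights, tokens))
                 for i in range(dim)]
        outputs.append(mixed)
    return outputs

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # made-up 2-d "embeddings"
contextual = self_attention(tokens)
```

Every output vector blends information from the whole sequence in a single step, which is what lets the model look at an entire sentence at once instead of reading it token by token as an RNN does.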

Training is a cycle of trial and error:

Forward Propagation: The model makes a guess.

Loss Function: We calculate how far off the guess was from the truth.

Backpropagation: We work backward through the network to see which “knobs” (weights) caused the error.

Optimization: Algorithms like Adam or SGD (Stochastic Gradient Descent) nudge the weights in the right direction to reduce the error next time.
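The four steps above can be sketched on the smallest possible “network”: a single neuron with one weight and one bias. This is a toy example under stated assumptions. The data follows a made-up rule (y = 2x + 1), the learning rate is an arbitrary choice, and the optimizer is plain SGD; Adam adds per-parameter adaptive step sizes on top of the same loop.

```python
import random

# Toy data from the made-up rule y = 2x + 1 (an assumption for illustration).
data = [(x, 2.0 * x + 1.0) for x in [-2.0, -1.0, 0.0, 1.0, 2.0]]

weight, bias = 0.0, 0.0      # the "knobs", starting from an arbitrary guess
learning_rate = 0.05         # hypothetical step size
rng = random.Random(0)       # fixed seed so the run is repeatable

def mean_squared_error(w, b):
    """Average squared gap between predictions and targets."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

initial_loss = mean_squared_error(weight, bias)

for epoch in range(200):
    rng.shuffle(data)                          # "stochastic": random sample order
    for x, target in data:
        prediction = weight * x + bias         # 1. forward propagation
        error = prediction - target            # 2. loss for this sample is error ** 2
        grad_weight = 2.0 * error * x          # 3. backpropagation: d(loss)/d(weight)
        grad_bias = 2.0 * error                #    and d(loss)/d(bias)
        weight -= learning_rate * grad_weight  # 4. optimization step (SGD)
        bias -= learning_rate * grad_bias

final_loss = mean_squared_error(weight, bias)
print(weight, bias, final_loss)
```

With noise-free data, the weight and bias settle close to the rule that generated the data. In a deep network the gradients in step 3 are computed layer by layer with the chain rule, but the update in step 4 looks the same.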

A common pitfall is Overfitting, where a model becomes so focused on the training data that it fails in the real world. To combat this, engineers use:

Dropout: Randomly “turning off” neurons during training so the network doesn’t become too reliant on specific ones.

Data Augmentation: Creating “new” data by flipping or rotating images to teach the model variety.

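Batch Normalization: Rescaling the activations within each mini-batch to a consistent mean and variance as data flows through the network. Its main benefit is faster, more stable training, and it often has a mild regularizing effect as well.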
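Two of these techniques are simple enough to sketch directly. The snippet below shows one common formulation of dropout (often called “inverted” dropout, which rescales the surviving activations) and a horizontal flip as a basic augmentation. The function names, the 50% drop probability, and the tiny “image” are assumptions for illustration only.

```python
import random

def dropout(activations, drop_probability, rng, training=True):
    """Inverted dropout: zero each activation with the given probability.

    Survivors are scaled by 1 / (1 - p) so the expected value is unchanged,
    which lets inference use the activations as-is.
    """
    if not training or drop_probability == 0.0:
        return list(activations)
    keep_probability = 1.0 - drop_probability
    return [a / keep_probability if rng.random() < keep_probability else 0.0
            for a in activations]

def flip_horizontal(image):
    """Data augmentation: mirror an image given as a list of pixel rows."""
    return [list(reversed(row)) for row in image]

rng = random.Random(42)                      # fixed seed for repeatability
activations = [1.0] * 10
dropped = dropout(activations, 0.5, rng)     # each entry is 0.0 or 2.0
unchanged = dropout(activations, 0.5, rng, training=False)
mirrored = flip_horizontal([[1, 2, 3], [4, 5, 6]])
```

Note that dropout is applied only during training. At inference time the full network is used, which is why the sketch returns the activations untouched when `training` is false.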

As models grow larger, we face new hurdles. Training a very large model consumes large amounts of electricity, raising environmental concerns. To address this, researchers are turning to Model Compression techniques like:

Pruning: Removing weights or neurons that contribute little to the network’s output.

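Quantization: Storing weights (and often activations) at lower numerical precision, for example 8-bit integers instead of 32-bit floats, to save memory and speed up computation.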
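As a rough sketch of both ideas, the snippet below applies magnitude-based pruning and a simple symmetric 8-bit quantization to a short list of weights. These are only one basic variant of each technique. The function names, the threshold, and the weights are hypothetical, and production toolkits use more careful schemes.

```python
def prune_by_magnitude(weights, threshold):
    """Magnitude pruning: zero out weights whose absolute value is below threshold."""
    return [w if abs(w) >= threshold else 0.0 for w in weights]

def quantize_int8(weights):
    """Symmetric 8-bit quantization: map floats to integers in [-127, 127]."""
    largest = max(abs(w) for w in weights)
    scale = largest / 127.0 if largest > 0 else 1.0
    return [round(w / scale) for w in weights], scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]

weights = [0.8, -0.02, 0.31, 0.005, -1.27]    # made-up example weights
pruned = prune_by_magnitude(weights, threshold=0.05)
quantized, scale = quantize_int8(pruned)
restored = dequantize(quantized, scale)
print(pruned, quantized, restored)
```

The restored weights differ slightly from the originals. That small rounding error is the price paid for storing each weight in one byte instead of four.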

Furthermore, Explainability is becoming vital. We need to move away from “black box” AI and toward systems that can explain why they made a specific decision, especially in fields like law or medicine.

Neural networks are the heartbeat of modern technology. From the simple perceptron to the vast “Transformer” models of today, they have become incredibly sophisticated. However, their true power lies not just in their size but in the careful optimization of their layers, the quality of their data, and the ethical guardrails we put in place. As we look toward the future, the focus will shift from “bigger is better” to “smarter and more efficient,” helping AI remain a useful and sustainable tool for everyone.
