In the modern digital landscape, data has been called the new oil. However, raw data, like crude oil, is of limited value until it is refined. Predictive data analysis is the refinery of the 21st century, and machine learning (ML) algorithms are the specialized tools that perform the extraction. As we navigate through 2026, the ability to forecast future trends based on historical patterns has moved from a competitive advantage to a fundamental necessity for survival in business, healthcare, and governance.

The evolution of predictive analytics has been propelled by the exponential growth of computing power and the ubiquity of big data. Machine learning algorithms allow us to move beyond simple statistical descriptions of “what happened” to sophisticated probabilistic models of “what will happen next.” This article provides an in-depth exploration of the most influential machine learning algorithms used for predictive analysis, their real-world applications, and the ethical considerations that come with the power of foresight.

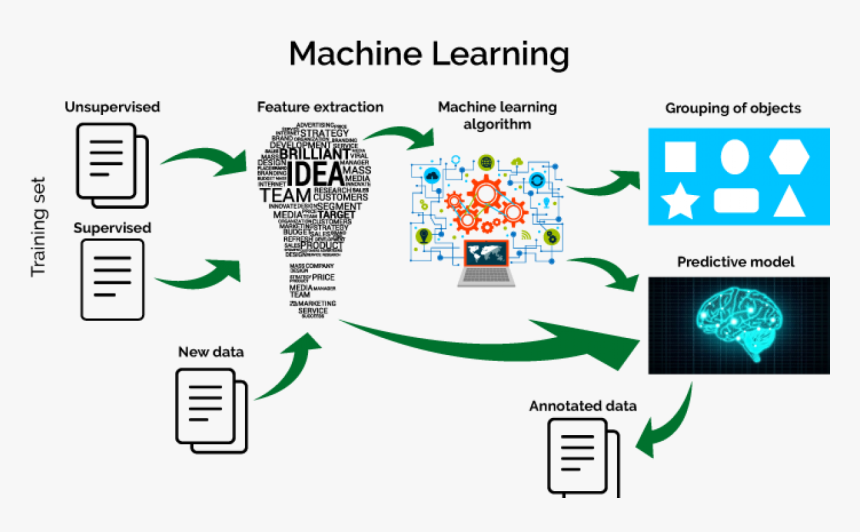

Predictive analysis through machine learning operates on a simple yet profound premise: the past is a prologue. By feeding historical data into an algorithm, the system identifies underlying mathematical relationships and patterns. Once “trained,” these models can ingest new, unseen data to generate predictions with measurable degrees of confidence.

This process is generally categorized into three learning styles: supervised, unsupervised, and reinforcement learning. Supervised learning is the cornerstone of predictive analysis, where the algorithm is provided with “labeled” data—meaning the outcome is already known. For example, to predict housing prices, a model is trained on thousands of previous sales where both the features (square footage, location) and the final price are known. By 2026, the efficiency of these models has reached a point where real-time prediction is standard across most digital platforms.

Regression is perhaps the oldest and most widely used family of predictive algorithms. It is used when the target variable is a continuous number, such as revenue, temperature, or stock price.

Linear Regression is the starting point, establishing a relationship between an independent variable and a dependent variable. However, real-world data is rarely linear. This has led to the adoption of Polynomial Regression and Support Vector Regression (SVR) for more complex, curved data patterns. In the retail sector, regression models are used to predict inventory needs. A 2025 study showed that companies using advanced SVR for supply chain forecasting reduced overstock costs by 22% while simultaneously decreasing “out-of-stock” incidents by 15%.

Decision Trees are intuitive algorithms that mimic human decision-making by splitting data into branches based on certain criteria. While easy to understand, a single decision tree is prone to “overfitting”—where the model learns the “noise” of the training data too well and fails on new data.

To solve this, data scientists use Random Forests. This is an ensemble method that builds hundreds of different decision trees and averages their results. It is the “wisdom of the crowd” applied to mathematics. Random Forests are exceptionally robust and are the industry standard for credit scoring in the banking world. By analyzing thousands of data points—from transaction history to social media behavior—banks in 2026 can predict the likelihood of loan default with an accuracy rate exceeding 92%, significantly outperforming traditional credit bureau scores.

If Random Forest is about the “average” of many trees, Gradient Boosting is about “perfection through correction.” Algorithms like XGBoost, LightGBM, and CatBoost work by building trees sequentially. Each new tree focuses on correcting the errors made by the previous ones.

In 2026, XGBoost is arguably the most popular algorithm for structured data competitions and high-stakes predictive tasks. It is incredibly fast and precise. A major case study in the energy sector involved using LightGBM to predict power surges in smart grids. By analyzing weather patterns, time of day, and historical usage, the model predicted peak demand periods within a 2% margin of error. This allowed the utility provider to optimize energy distribution and reduce carbon emissions by avoiding the sudden activation of “peaker” coal plants.

While the algorithms mentioned above excel with structured data (like spreadsheets), Deep Learning—powered by Neural Networks—is the king of unstructured data, such as images, audio, and text. These models are inspired by the architecture of the human brain, consisting of layers of interconnected “neurons.”

Recurrent Neural Networks (RNNs) and Long Short-Term Memory (LSTM) networks are specifically designed for “sequence prediction.” This makes them ideal for time-series analysis, such as predicting future stock market movements based on decades of tick-by-tick data. In healthcare, Deep Learning models are now being used to predict patient deterioration. By analyzing real-time vital signs in ICUs, these algorithms can predict a septic event up to 12 hours before physical symptoms appear, a development that has saved countless lives since its widespread adoption in 2024.

For classification-based prediction, Naive Bayes and Support Vector Machines remain highly effective. Naive Bayes is based on Bayes’ Theorem of probability and is famous for its “naivety”—it assumes all features are independent of one another. Despite this simplification, it is incredibly fast and effective for text-based predictions.

Support Vector Machines, on the other hand, work by finding the “hyperplane” that best separates different classes of data. In 2026, SVMs are widely used in bioinformatics to predict the presence of genetic diseases. By mapping complex genomic data into a high-dimensional space, the algorithm can identify subtle patterns that indicate a predisposition to certain conditions. Naive Bayes continues to be the workhorse behind the scenes for email spam prediction and sentiment analysis in customer service departments.

The KNN algorithm operates on the simple principle that similar things exist in close proximity. To predict the label of a new data point, the algorithm looks at the ‘K’ most similar instances in the training set and assigns the most common outcome.

KNN is widely used in recommendation engines. When a streaming service predicts which movie you will enjoy next, it is essentially finding “neighbors”—other users who have similar tastes to yours. However, KNN can be computationally expensive as the dataset grows, as it must calculate the distance between the new point and every single existing point. By 2026, “Approximate Nearest Neighbor” (ANN) search techniques have mitigated this issue, allowing for lightning-fast recommendations across databases containing billions of items.

Predictive analysis often involves data points indexed in time order. Standard algorithms often struggle with this because the data is not independent—today’s price is heavily influenced by yesterday’s. This requires specialized models like ARIMA (AutoRegressive Integrated Moving Average) and Prophet.

In 2026, time-series forecasting is critical for global economics. Governments use these models to predict inflation and GDP growth. An interesting application has been in the field of cybersecurity. By modeling “normal” network traffic as a time series, AI systems can predict when a pattern is deviating slightly from the norm, indicating a “zero-day” attack in its earliest stages. These models account for seasonality (e.g., increased web traffic during the holidays) and trends, providing a nuanced view of the future.

An algorithm is only as good as the data it is fed. Feature Engineering is the process of using domain knowledge to create variables that make machine learning algorithms work better. It is often the difference between a mediocre model and a world-class one.

For example, in predicting taxi demand, raw data might include “date and time.” A data scientist might engineer a “is_holiday” feature or a “distance_to_nearest_stadium” feature. These engineered features provide the context the algorithm needs to make sense of the numbers. In 2026, automated feature engineering tools have become mainstream, using AI to test millions of combinations of data to find the most predictive variables, a process that used to take human analysts months to complete.

As predictive algorithms become more powerful, the ethical implications grow. If an algorithm predicts a person is “likely” to commit a crime or “likely” to be a poor employee, it can lead to “preemptive bias.” In 2026, many nations have passed “Right to Explanation” laws, requiring companies to be able to explain exactly why an algorithm made a certain prediction.

The future of predictive data analysis lies in “Explainable AI” (XAI). We are moving away from “Black Box” models toward systems that can provide a rationale for their foresight. Furthermore, the integration of Quantum Computing with ML algorithms is on the horizon. Quantum algorithms promise to process multi-dimensional datasets that are currently impossible to analyze, potentially allowing us to predict complex systems like global climate shifts or the folding of proteins with perfect accuracy. The era of the “Crystal Ball” has been replaced by the era of the “Calculated Probability.”

Machine learning algorithms have transformed predictive data analysis from a niche academic pursuit into the engine of the global economy.

In 2026, the question is no longer whether a company or government uses predictive algorithms, but how well they use them. As algorithms continue to evolve, the ability to turn historical data into future-proof strategies will remain the most critical skill in the digital age.