In the landscape of Artificial Intelligence, the ability to make sequential decisions under uncertainty is the hallmark of true intelligence. Among AI approaches, reinforcement learning methods for decision-making systems have drawn special attention. While supervised learning excels at pattern recognition and unsupervised learning at structure discovery, it is Reinforcement Learning (RL) that tackles the fundamental problem of interaction. RL is the computational approach to learning from action, where an “agent” learns to behave in an environment by performing actions and observing the rewards that result.

As we navigate through 2026, RL has transitioned from winning board games like Go to managing real-world complexities. From optimizing the power grids of smart cities to personalizing medical treatment plans, RL methods are the engines behind modern decision-making systems. This article provides a comprehensive deep dive into the methods, algorithms, and applications of reinforcement learning, exploring how these systems learn to navigate the delicate balance between immediate gratification and long-term success.

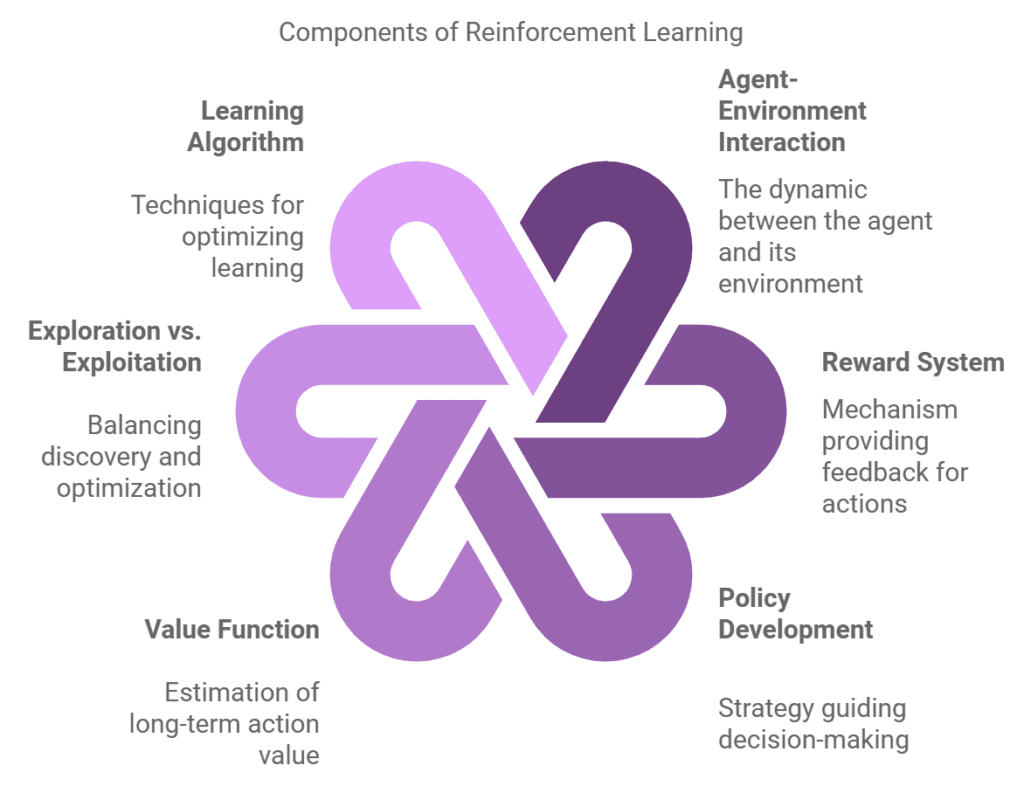

At the heart of every RL system lies a mathematical framework known as the Markov Decision Process (MDP). To understand how an agent makes a decision, we must first understand the world it lives in. An MDP is defined by four primary components: States ($S$), Actions ($A$), Transition Probabilities ($P$), and Rewards ($R$).
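As a rough illustration, the four components can be written down directly as plain Python data. The sketch below assumes a hypothetical two-state inventory problem; the state names, action names, probabilities, and rewards are all invented for the example.

```python
import random

# Hypothetical two-state MDP; every name and number here is illustrative.
STATES = ["low_stock", "high_stock"]
ACTIONS = ["wait", "reorder"]

# P[state][action] -> list of (next_state, probability)
P = {
    "low_stock": {
        "wait": [("low_stock", 1.0)],
        "reorder": [("high_stock", 0.9), ("low_stock", 0.1)],
    },
    "high_stock": {
        "wait": [("high_stock", 0.7), ("low_stock", 0.3)],
        "reorder": [("high_stock", 1.0)],
    },
}

# R[state][action] -> immediate reward
R = {
    "low_stock": {"wait": -1.0, "reorder": -2.0},
    "high_stock": {"wait": 2.0, "reorder": 0.5},
}

def step(state, action, rng=random):
    """Sample one transition and return (next_state, reward)."""
    next_states, probs = zip(*P[state][action])
    next_state = rng.choices(next_states, weights=probs, k=1)[0]
    return next_state, R[state][action]
```

Calling `step("low_stock", "reorder")` plays the role of the environment: the agent picks the action, and the MDP decides what happens next.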

The core philosophy of RL is the Reward Hypothesis: all goals can be described by the maximization of expected cumulative reward. Unlike traditional programming, where a developer writes “if-then” rules, the RL designer defines what to achieve through a reward function, and the agent discovers how to achieve it. In 2026, the challenge has shifted from simple reward maximization to “Reward Engineering”: ensuring that the agent doesn’t exploit “loopholes” in the reward function (a failure often called reward hacking) to gain points without actually solving the problem.
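“Cumulative reward” is commonly formalized as a discounted sum, in which a reward received later counts a little less than one received now. The sketch below assumes a discount factor `gamma`, which the text above does not name, and uses made-up reward sequences.

```python
def discounted_return(rewards, gamma=0.9):
    """Sum of rewards where the reward at step t is weighted by gamma**t."""
    g = 0.0
    for r in reversed(rewards):
        g = r + gamma * g
    return g

# Illustrative reward sequences: a quick payoff versus a larger delayed one.
quick_win = [5.0, 0.0, 0.0]
delayed_win = [0.0, 0.0, 10.0]

patient = (discounted_return(quick_win, 0.9), discounted_return(delayed_win, 0.9))
impatient = (discounted_return(quick_win, 0.3), discounted_return(delayed_win, 0.3))
```

With `gamma=0.9` the delayed payoff scores higher, while with `gamma=0.3` the quick payoff wins, which is one simple way to see the trade-off between immediate gratification and long-term success.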

Value-based methods are built on the idea of estimating how “good” it is to be in a certain state. The most famous of these is Q-Learning. In this method, the agent maintains a “Q-Table” or a function that stores the expected long-term return for taking a specific action in a specific state.

The magic of value-based methods lies in the Bellman Equation, which expresses the value of an action as its immediate reward plus the discounted value of the best action available afterward. This lets an agent update its current estimates based on future expectations. In the context of 2026 supply chain management, value-based RL is used to predict the “value” of holding inventory. A system might decide to take a short-term loss (higher storage costs) because the Q-value of holding stock accounts for the expected cost of a likely supply shortage in the next month. This foresight is what separates RL-driven systems from traditional reactive inventory software.
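In tabular Q-Learning, the Bellman idea turns into a one-line update: $Q(s,a) \leftarrow Q(s,a) + \alpha\,[r + \gamma \max_{a'} Q(s',a') - Q(s,a)]$. The sketch below is a minimal version of that update; the learning rate `alpha`, the discount factor `gamma`, and the state and action names are assumptions chosen for illustration.

```python
from collections import defaultdict

def q_learning_update(Q, state, action, reward, next_state, actions,
                      alpha=0.1, gamma=0.9):
    """Apply one tabular Q-learning update in place and return the TD error."""
    best_next = max(Q[(next_state, a)] for a in actions)
    td_error = reward + gamma * best_next - Q[(state, action)]
    Q[(state, action)] += alpha * td_error
    return td_error

Q = defaultdict(float)          # unseen (state, action) pairs start at 0.0
actions = ["hold", "sell"]      # hypothetical inventory actions

# One observed transition: holding stock in s0 paid 1.0 and led to s1.
q_learning_update(Q, "s0", "hold", reward=1.0, next_state="s1", actions=actions)
```

After this single update, `Q[("s0", "hold")]` moves a fraction `alpha` of the way toward the observed target, which is how the table gradually absorbs long-term consequences.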

Tabular Q-Learning breaks down when the number of possible states becomes too large to fit in a table. This is where Deep Q-Networks (DQN) come in. By using a neural network as a function approximator in place of the table, the agent can “generalize” from its experiences. It doesn’t need to see every possible situation to know what to do; it can infer the best action based on similar past states.
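To show the generalization effect without a deep learning library, the sketch below swaps the neural network for a linear function approximator trained with semi-gradient Q-Learning. This is a deliberate simplification, not a DQN: a real DQN uses a neural network and adds components such as an experience replay buffer and a target network. The feature vectors and hyperparameters are invented for the example.

```python
def q_value(weights, features, action):
    """Linear estimate of Q(s, a): dot product of that action's weights and the state features."""
    return sum(w * x for w, x in zip(weights[action], features))

def semi_gradient_update(weights, features, action, reward, next_features,
                         n_actions, alpha=0.1, gamma=0.9, done=False):
    """One semi-gradient Q-learning step on the weights; returns the TD error."""
    target = reward
    if not done:
        target += gamma * max(q_value(weights, next_features, a)
                              for a in range(n_actions))
    td_error = target - q_value(weights, features, action)
    # For a linear model, the gradient with respect to the weights is the feature vector.
    weights[action] = [w + alpha * td_error * x
                       for w, x in zip(weights[action], features)]
    return td_error

# Two actions, two state features, all weights start at zero.
weights = [[0.0, 0.0], [0.0, 0.0]]
semi_gradient_update(weights, [1.0, 0.5], action=0, reward=1.0,
                     next_features=[0.0, 1.0], n_actions=2, done=True)

# A similar state that was never visited now gets a non-zero estimate.
estimate = q_value(weights, [0.9, 0.6], 0)
```

The key point is the last line: because nearby states share features, one update changes the estimate for states the agent has never seen.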

A landmark case study for DQN involved Google’s DeepMind, which used the algorithm to master Atari games. However, in 2026, the application is much more serious. DQNs are currently used in autonomous underwater vehicles (AUVs) for deep-sea exploration. These agents use DQNs to navigate environments where light and pressure conditions are constantly changing, making it impossible to pre-program every potential obstacle. The network learns to identify “safe” patterns in the sensor data, allowing for autonomous decision-making in the world’s most hostile environments.

While value-based methods find the best actions indirectly, by first estimating values and then choosing the highest-valued action, Policy Gradient (PG) methods act directly on the policy itself. Instead of asking “How much reward will I get?”, the agent asks “How should I change my behavior to get more reward?”

Policy gradients are particularly useful when the action space is continuous—for example, the exact angle of a robotic arm or the precise flow rate in a chemical refinery. In 2026, PG methods are the standard for high-precision manufacturing. Statistics from the automotive industry show that RL systems using policy gradients have improved the precision of robotic welding by 14% compared to traditional PID controllers. This is because the RL agent can adapt to the microscopic wear and tear of the machinery in real-time, adjusting its “policy” to maintain perfect accuracy.
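For a continuous action, a common choice is a Gaussian policy whose mean is the learnable parameter. The sketch below applies the REINFORCE policy gradient to a one-step toy task with a running-average baseline; the target value, noise level, learning rates, and reward shape are all assumptions made for illustration, not a model of any real controller.

```python
import random

def reinforce_gaussian(target=2.0, sigma=0.5, lr=0.01, steps=5000, seed=0):
    """Learn the mean of a Gaussian policy with REINFORCE on a one-step task.

    The reward is -(action - target)**2, so the best mean equals `target`.
    """
    rng = random.Random(seed)
    mu = 0.0          # policy parameter: mean of the action distribution
    baseline = 0.0    # running average of reward, used to reduce variance
    for _ in range(steps):
        action = rng.gauss(mu, sigma)
        reward = -(action - target) ** 2
        # Derivative of log N(action | mu, sigma) with respect to mu.
        grad_log_pi = (action - mu) / sigma ** 2
        mu += lr * (reward - baseline) * grad_log_pi
        baseline += 0.05 * (reward - baseline)
    return mu

learned_mean = reinforce_gaussian()
```

The agent never estimates the value of each possible action. It only nudges the mean toward actions that scored better than the baseline, so `learned_mean` should drift from 0.0 toward the target.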

One of the most powerful paradigms in modern RL is the Actor-Critic method. This architecture combines the strengths of both value-based and policy-based methods. The “Actor” handles the behavior (policy), while the “Critic” evaluates the actions taken (value function).

The Critic observes the Actor’s performance and provides feedback: “That action was better than expected” or “That was a mistake.” Using this feedback in place of raw returns reduces the “variance” or instability often found in pure policy gradient methods. In 2026, Asynchronous Advantage Actor-Critic (A3C) and Proximal Policy Optimization (PPO) are the dominant algorithms in financial trading systems. These systems use the “Critic” to assess the risk of a market move, while the “Actor” executes trades. This two-part structure allows the system to remain aggressive in seeking profit while having a built-in “safety check” that understands market volatility.
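The Critic’s “better or worse than expected” signal is usually computed as a temporal-difference (TD) error. The sketch below shows a single one-step actor-critic update on tabular parameters. It is a basic textbook form, not A3C or PPO, and the state names, learning rates, and discount factor are assumptions.

```python
import math

def softmax(prefs):
    """Turn action preferences into probabilities."""
    m = max(prefs)
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]

def actor_critic_step(theta, V, state, action, reward, next_state, done,
                      actor_lr=0.1, critic_lr=0.1, gamma=0.9):
    """One-step actor-critic update, in place.

    theta[state] holds action preferences (the Actor);
    V[state] holds the state-value estimate (the Critic).
    Returns the TD error used as the feedback signal.
    """
    target = reward + (0.0 if done else gamma * V[next_state])
    td_error = target - V[state]
    V[state] += critic_lr * td_error
    probs = softmax(theta[state])
    for a in range(len(probs)):
        # Gradient of log softmax: 1 - pi(a) for the taken action, -pi(a) otherwise.
        grad = (1.0 if a == action else 0.0) - probs[a]
        theta[state][a] += actor_lr * td_error * grad
    return td_error

theta = {"s0": [0.0, 0.0]}
V = {"s0": 0.0, "s1": 0.0}
delta = actor_critic_step(theta, V, "s0", action=1, reward=1.0,
                          next_state="s1", done=False)
```

A positive `delta` means the outcome beat the Critic’s expectation, so the Actor raises the probability of the action it just took; a negative one lowers it.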

Every decision-making system faces a fundamental conflict: Should I stay with the best option I know (Exploitation), or should I try something new that might be even better (Exploration)? If an agent only exploits, it might get stuck in a sub-optimal routine. If it only explores, it never gathers any actual reward.

In 2026, researchers use sophisticated methods like Upper Confidence Bound (UCB) and Thompson Sampling to manage this trade-off. In digital marketing, these methods are applied to “Multi-Armed Bandit” problems, where each option is an “arm” with an unknown payoff. When a news site decides which headline to show you, it is balancing exploitation (showing the headline that gets the most clicks) with exploration (testing new headlines to see if they perform better). Modern RL systems have also moved toward “Curiosity-Driven Exploration,” where the agent receives an extra, intrinsic reward for finding parts of the environment it doesn’t understand yet.
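The headline scenario can be sketched with UCB1, one standard member of the UCB family: each arm’s score is its average reward plus a bonus that shrinks as the arm is tried more often. The click rates below are invented, and the simulated reader is just a random number generator.

```python
import math
import random

def ucb1_choice(counts, values, t):
    """Pick an arm by UCB1: mean reward plus an exploration bonus."""
    for arm, n in enumerate(counts):
        if n == 0:
            return arm  # try every arm once before comparing scores
    scores = [values[a] + math.sqrt(2.0 * math.log(t) / counts[a])
              for a in range(len(counts))]
    return max(range(len(scores)), key=scores.__getitem__)

def run_ucb1(click_rates, rounds=5000, seed=0):
    """Simulate showing headlines with hidden click rates; return pulls per headline."""
    rng = random.Random(seed)
    counts = [0] * len(click_rates)
    values = [0.0] * len(click_rates)   # running mean reward per arm
    for t in range(1, rounds + 1):
        arm = ucb1_choice(counts, values, t)
        reward = 1.0 if rng.random() < click_rates[arm] else 0.0
        counts[arm] += 1
        values[arm] += (reward - values[arm]) / counts[arm]
    return counts

# Hypothetical click-through rates for three headlines.
pulls = run_ucb1([0.05, 0.10, 0.30])
```

Over time most impressions go to the best headline, but the weaker ones are still shown occasionally, because their bonus grows while they are ignored.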

Most well-known RL algorithms are Model-Free, meaning they learn purely by trial and error without understanding the “physics” of their world. Model-Based RL, by contrast, has the agent build an internal simulation of the environment.

A model-based agent can “think” before it acts. It can run thousands of simulations in its “head” to see the likely outcome of a decision before ever moving in the real world. This is crucial for systems where failure is expensive, such as autonomous aviation. In 2026, commercial drones use model-based RL to predict how wind gusts will affect their flight path. By simulating the impact of the wind internally, the drone can make a decision to bank or slow down before the wind even hits, leading to a 30% increase in flight stability during adverse weather.
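“Thinking before acting” can be sketched as exhaustive lookahead planning: the agent tries every short action sequence inside its model and commits only to the first action of the best one. The one-dimensional wind model below is a hypothetical stand-in, and a learned model would replace `toy_model` in practice.

```python
from itertools import product

def plan(state, model, actions, horizon=3, gamma=0.9):
    """Return the first action of the best simulated action sequence.

    `model(state, action)` is the agent's internal simulator and must
    return (next_state, reward). Nothing is executed in the real world here.
    """
    best_return, best_first = float("-inf"), None
    for sequence in product(actions, repeat=horizon):
        s, total, discount = state, 0.0, 1.0
        for a in sequence:
            s, r = model(s, a)
            total += discount * r
            discount *= gamma
        if total > best_return:
            best_return, best_first = total, sequence[0]
    return best_first

# Hypothetical model: the state is the lateral offset from the flight path,
# a steady gust pushes +2 per step, and the reward penalises the offset.
def toy_model(offset, action):
    push = {"bank_left": -2, "hold": 0, "bank_right": 2}[action]
    next_offset = offset + push + 2
    return next_offset, -abs(next_offset)

first_action = plan(0, toy_model, ["bank_left", "hold", "bank_right"])
```

In this toy setting the planner chooses to bank into the gust, because every simulated alternative ends up further off course. Enumerating all sequences only works for tiny action sets and short horizons; larger problems need sampling or search.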

The most complex frontier of decision-making is when multiple agents must interact in the same space. In Multi-Agent RL (MARL), the environment is non-stationary because every time one agent learns and changes its behavior, the “world” changes for all the other agents.

MARL is the backbone of the 2026 autonomous traffic system. In a smart city, every self-driving car is an RL agent. If they only acted for their own reward (getting to the destination fastest), the result would be total gridlock. Through MARL, these cars learn “Cooperative Policies.” They learn that by slowing down to let another car merge, the “Global Reward” (total traffic flow) increases, which eventually benefits them too. This shift from “Egoistic RL” to “Collaborative RL” is what has finally made autonomous urban transport a reality in the mid-2020s.

One major hurdle for RL in decision-making has been the danger of the “learning phase.” You cannot let a medical AI “experiment” on live patients to see what works. Offline RL solves this by allowing agents to learn from historical data—thousands of hours of past doctor-patient interactions—without ever taking a risky action during the training.

In 2026, healthcare systems use Offline RL to determine the optimal dosage for chronic kidney disease treatments. By analyzing years of electronic health records, the RL agent identifies patterns in how patients responded to different dosages. It then creates a decision-making system that is safer and more effective than traditional “one-size-fits-all” guidelines. Statistics from 2025 clinical trials showed that RL-guided personalized dosing reduced adverse drug reactions by 19% across three major hospital networks.
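The core idea of learning from a fixed log can be sketched by replaying recorded transitions through the ordinary Q-Learning update, without ever calling an environment. The records below are invented toy data with made-up state and action labels, not clinical guidance. This naive replay also ignores the distribution-shift problem (overestimating actions the log rarely contains) that dedicated offline RL algorithms are designed to handle.

```python
from collections import defaultdict

def offline_q_learning(dataset, actions, alpha=0.1, gamma=0.9, epochs=200):
    """Tabular Q-learning replayed over a fixed log of transitions.

    `dataset` is a list of (state, action, reward, next_state, done) tuples
    recorded earlier; the agent never acts in the environment here.
    """
    Q = defaultdict(float)
    for _ in range(epochs):
        for s, a, r, s2, done in dataset:
            best_next = 0.0 if done else max(Q[(s2, b)] for b in actions)
            Q[(s, a)] += alpha * (r + gamma * best_next - Q[(s, a)])
    return Q

# Hypothetical logged records; states, actions, and rewards are invented.
log = [
    ("stable", "option_a", 1.0, "stable", True),
    ("stable", "option_b", -1.0, "adverse", True),
    ("unstable", "option_a", 0.0, "stable", False),
    ("unstable", "option_b", 0.5, "stable", True),
]
Q_offline = offline_q_learning(log, ["option_a", "option_b"])
```

Here `option_a` in the `unstable` state earns nothing immediately, yet it ends up with the higher Q-value because the log shows it leads to a state where a good outcome is available.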

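The final frontier for RL methods is Reinforcement Learning from Human Feedback (RLHF). This is the method used to align large AI models with human values. Instead of a hand-written mathematical reward, a human tells the agent: “This decision was helpful” or “This decision was harmful.” These judgments are typically used to train a reward model, which then supplies the reward signal for standard RL training.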
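In practice, human feedback is often collected as pairwise comparisons (“this response is better than that one”) and turned into a learned reward model. The sketch below fits a linear reward model with a Bradley-Terry style preference loss. The two-dimensional feature vectors are hypothetical, and the later stage that optimizes a policy against the learned reward is omitted.

```python
import math

def preference_loss(reward_chosen, reward_rejected):
    """Bradley-Terry style loss: -log sigmoid(r_chosen - r_rejected)."""
    diff = reward_chosen - reward_rejected
    return math.log1p(math.exp(-diff))

def train_reward_weights(pairs, n_features, lr=0.5, epochs=200):
    """Fit a linear reward model r(x) = w . x from (chosen, rejected) feature pairs."""
    w = [0.0] * n_features
    for _ in range(epochs):
        for chosen, rejected in pairs:
            diff = sum(wi * (c - r) for wi, c, r in zip(w, chosen, rejected))
            # The loss gradient with respect to diff is -(1 - sigmoid(diff)),
            # so gradient descent adds (1 - sigmoid(diff)) * (chosen - rejected).
            scale = 1.0 - 1.0 / (1.0 + math.exp(-diff))
            w = [wi + lr * scale * (c - r)
                 for wi, c, r in zip(w, chosen, rejected)]
    return w

# Hypothetical features: [helpfulness signal, harm signal].
pairs = [
    ([1.0, 0.0], [0.0, 1.0]),
    ([0.8, 0.1], [0.3, 0.9]),
]
reward_weights = train_reward_weights(pairs, n_features=2)
```

The learned weights end up positive on the first feature and negative on the second, so the model assigns higher reward to outputs resembling the ones humans preferred.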

As we look toward 2030, the focus is on “Generalization”—creating RL agents that don’t just solve one task, but can take the logic of decision-making from one domain to another. A decision-making system that learns to manage a warehouse should be able to apply the basics of “efficiency” and “resource management” to a hospital or a data center with minimal retraining. We are moving from “Narrow RL” to “Agile RL,” where the machine’s ability to choose is as flexible as the human mind it seeks to emulate.

Reinforcement learning has redefined the architecture of decision-making systems. By shifting from static rules to dynamic learning, RL allows machines to navigate a world that is messy, unpredictable, and complex.

Reinforcement learning is not just a branch of AI; it is the fundamental science of how an intelligent entity makes a choice, learns from the consequence, and builds a better future through the power of iteration.